Azure vnet and throughput

-

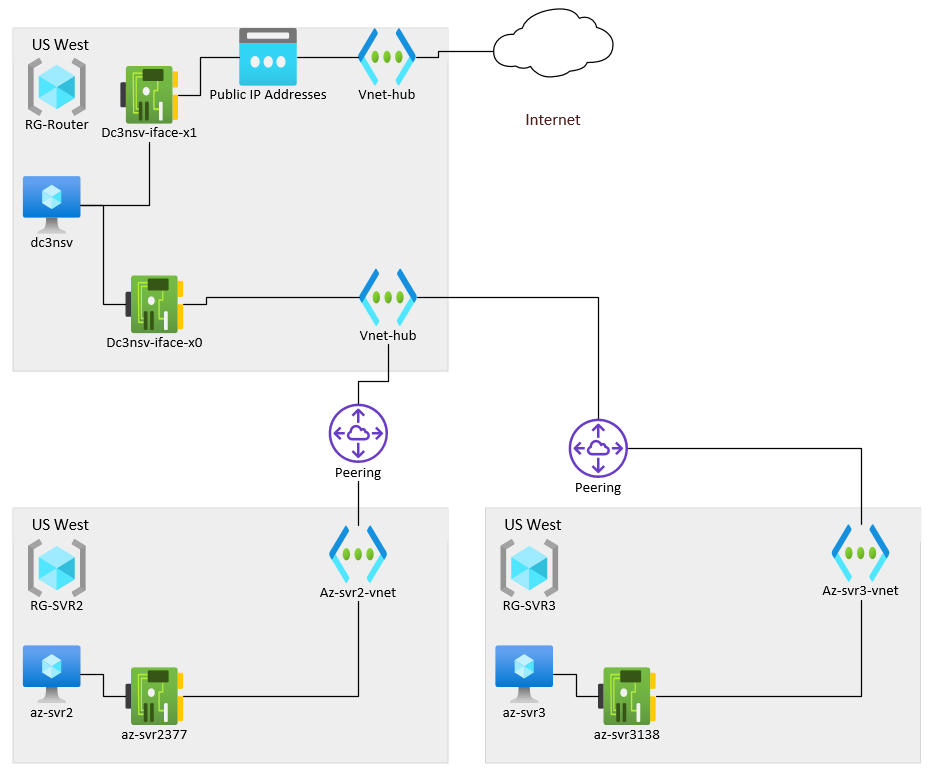

Hi everyone, I've deployed a lab network in Azure and am seeing network throughput issues I didn't expect. Please consider the network diagram above.

In this case, the resource group RG-Router contains a virtual appliance that serves as the default (0.0.0.0/0) route to the internet. All servers run Windows Server 2019 Datacenter and have only private addresses assigned. az-svr2 communicates with az-svr3 via a "hub" VNet and the related peerings. All resources reside in the US West Azure region, and the VM sizes are listed below. For some reason, when transferring data between the two servers hosted in Azure US West, I am only getting about 300 Mbps of throughput. All my research says these peered VNets should run at multi-gigabit speeds, provided the VM sizes support it.

Any idea why I might be seeing such poor performance?

az-svr2 Standard_D4s_v4

dc3nsv Standard_D4d_v4

az-svr3 Standard_D4s_v4
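When comparing numbers like the 300 Mbps above, it helps to be sure that file-copy rates (usually shown in megabytes per second) and link speeds (megabits per second) are converted the same way. Below is a minimal Python sketch of that arithmetic; the function names and the example file size and duration are hypothetical, chosen only to reproduce a ~300 Mbps figure.

```python
def throughput_mbps(bytes_transferred: int, seconds: float) -> float:
    """Convert a timed transfer into megabits per second (1 Mbps = 10**6 bits/s)."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return bytes_transferred * 8 / seconds / 1e6


def mb_per_s_to_mbps(rate: float, binary_units: bool = False) -> float:
    """Convert a rate in MB/s to Mbps.

    Set binary_units=True if the tool's "MB" means 2**20 bytes rather than 10**6.
    """
    bytes_per_mb = 2**20 if binary_units else 10**6
    return rate * bytes_per_mb * 8 / 1e6


# Hypothetical example: 3.75 GB copied in 100 seconds.
print(throughput_mbps(3_750_000_000, 100))  # 300.0
print(mb_per_s_to_mbps(37.5))  # 300.0
```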

-

I'm also interested to hear what answers you get.

-

@Adam-Tyler, I hope all is well. There are several reasons connectivity speeds may be slow; it would be hard to say for sure without more information and testing.

For instance, there may be issues with the rules in your NSGs, at either the NIC or the subnet level. There could also be UDRs defined on the subnet and/or the gateway subnet that are slowing traffic down.

Have you taken a look at the Azure Connectivity Toolkit (AzureCT)? It is a PowerShell module that can help you diagnose both connectivity and performance issues.

You can read about it here:

Hope that helps get you sorted out.

If you are still experiencing issues after poking around and doing some testing, you could try deploying new NICs and see if that helps (I don't think it will, as I don't think the NICs are the issue).

If nothing helps, I would suggest opening a support ticket with Microsoft and letting them take a look.

Good luck!

Cheers,

Adam

-

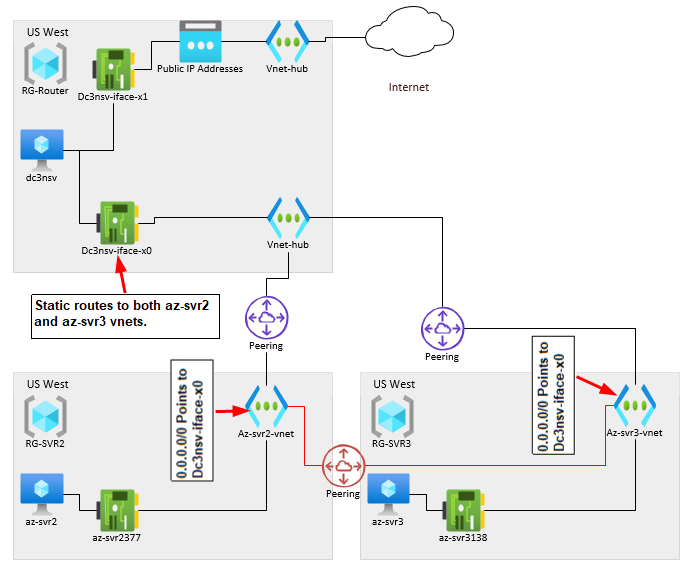

I am still troubleshooting this, but thought I'd post an update. I've created a direct peering between the two VNets containing the Windows VMs. Throughput when transferring files between az-svr1 and az-svr2 shot up to almost 1.5 Gbps. This effectively rules out disk performance and VM sizing as the cause. For some reason (I have a theory), performance drops through the floor when traffic goes through the hub network.

My guess is that the virtual appliance NAT/firewall, dc3nsv, can't handle the traffic. When the hub network is in use, all traffic has to bounce off this router and back down to the internal networks. It is a SonicWALL NSv270, which on paper should handle much more throughput, yet I can't get a basic internet speed test through it above about 700 Mbps. Interestingly, half of that is about 350 Mbps, which is roughly the throughput I see when transferring files between the two internal servers through the hub network.
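The halving theory above can be written as a simple bottleneck estimate: a path is no faster than its slowest hop, and if spoke-to-spoke traffic crosses the appliance twice, each pass gets roughly half of the appliance's capacity. This is a rough model under that assumption, not a description of how the NSv270 actually processes traffic. The 700 Mbps value is the speed-test result above, and 1500 Mbps stands in for the non-appliance part of the path based on the direct-peering result; the function names are hypothetical.

```python
def path_throughput_mbps(hop_limits_mbps: list[float]) -> float:
    """A path can be no faster than its slowest hop."""
    return min(hop_limits_mbps)


def hairpin_share_mbps(appliance_mbps: float, passes: int = 2) -> float:
    """Assumed model: each packet crosses the appliance `passes` times,
    so each pass gets an equal share of the appliance's capacity."""
    if passes < 1:
        raise ValueError("passes must be at least 1")
    return appliance_mbps / passes


# 1500 Mbps: observed over the direct peering (VMs and disks are not the limit).
# 700 Mbps: the appliance's measured speed-test ceiling.
via_hub = path_throughput_mbps([1500.0, hairpin_share_mbps(700.0)])
print(via_hub)  # 350.0
```

The model's ~350 Mbps is in the same range as the ~300 Mbps observed, which is consistent with (though it does not prove) the appliance being the bottleneck.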

-

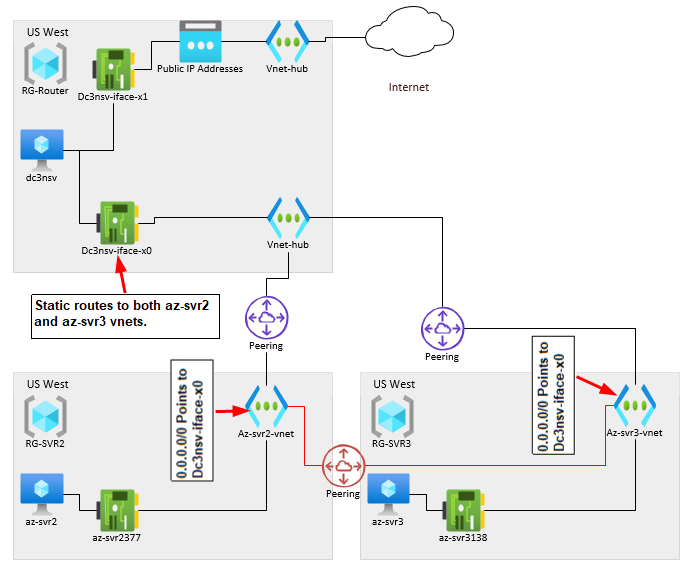

I've gotten to the bottom of this. The virtual appliance used as the firewall was the bottleneck. I threw out the SonicWALL and deployed a pay-as-you-go FortiGate; traffic now runs at line speed with no issues.

One word of caution: if you plan to build your networks in Azure like this, VNet peering costs 1 cent per GB transferred within the same region.

https://azure.microsoft.com/en-us/pricing/details/virtual-network/
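To put that warning in numbers, here is a rough cost sketch. It assumes the 1 cent/GB intra-region rate is billed on both the outbound side and the inbound side of a peering, and that spoke-to-spoke traffic in the hub design crosses two peerings (spoke to hub, then hub to spoke) while a direct peering crosses one. The function name and the 1,000 GB figure are hypothetical.

```python
def peering_cost_usd(
    gb: float,
    peerings_crossed: int,
    rate_per_gb: float = 0.01,
    billed_both_ends: bool = True,
) -> float:
    """Estimate VNet peering transfer charges at an assumed flat per-GB rate."""
    # Assumption: each peering bills egress from the source VNet and ingress
    # into the destination VNet at the same per-GB rate.
    ends = 2 if billed_both_ends else 1
    return gb * rate_per_gb * ends * peerings_crossed


print(peering_cost_usd(1000, peerings_crossed=1))  # 20.0 (direct spoke-to-spoke)
print(peering_cost_usd(1000, peerings_crossed=2))  # 40.0 (through the hub)
```

Under these assumptions, routing spoke-to-spoke traffic through the hub doubles the peering charges compared with a direct peering, before counting the appliance itself.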

Regards,

Adam Tyler